Description

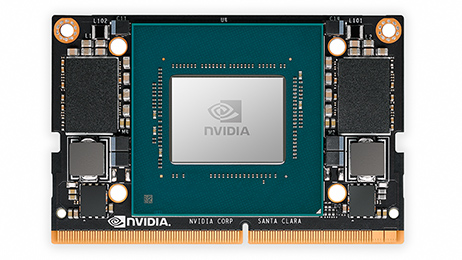

NVIDIA® Jetson Xavier™ NX brings supercomputer-like performance to the network edge in a compact system-on-module (SoM). With up to 21 TOPS for accelerated computing workflows, it lets you run modern neural networks in parallel and process the data streams collected by multiple high-resolution sensors, a fundamental requirement for state-of-the-art AI systems.

ALL THE PERFORMANCE OF NVIDIA XAVIER IN A COMPACT FORMAT

Measuring just 70 mm x 45 mm, Jetson Xavier NX delivers performance equivalent to that of the NVIDIA Xavier SoC in a module the size of Jetson Nano™. This compact, powerful module combines exceptional performance with high energy efficiency and offers a full range of input/output (I/O) options, including high-speed CSI and PCIe interfaces as well as lower-speed GPIO and I2C connections. Take advantage of the small footprint, optimized sensor interfaces, and phenomenal compute performance to bring new AI capabilities to embedded and edge systems.

UP TO 21 TOPS OF RAW POWER FOR AI

Jetson Xavier NX delivers up to 21 TOPS of raw power, making it an ideal solution for AI and high-performance computing workflows on embedded and edge systems. It combines 384 NVIDIA CUDA® cores, 48 Tensor Cores, a 6-core NVIDIA Carmel ARM CPU, and 2 NVDLA deep learning accelerator engines. The module also offers memory bandwidth of up to 51 GB/s and advanced video encoding and decoding capabilities. Together, these features make Xavier NX a platform of choice for running modern neural networks in parallel while simultaneously processing high-resolution data streams from multiple sensors.

EXCEPTIONAL ENERGY EFFICIENCY

Jetson Xavier NX supports multiple power modes, including low-power modes for battery-operated systems, and delivers up to 14 TOPS of processing power for AI applications while consuming no more than 10 W. This gives you enough capacity to run your sensors and peripherals in parallel while using the entire NVIDIA software stack. This level of performance lets you run modern AI networks and frameworks with GPU-accelerated libraries for deep learning, computer vision, computer graphics, multimedia, and more.
